Designing Structured Momentum in an AI Tutoring Platform

Ether

Defining interaction architecture, guardrails, and MVP scope for an AI-powered reflective assistant.

Role: Lead Product Designer

Scope: Interaction model, AI guidance system, MVP definition

Team: PM, 2 Engineers

The Problem: Conversational AI Without Structure

Early testing showed that users were willing to engage in reflective conversations with AI. Sessions were long, but outcomes were inconsistent.

Open-ended chat created three risks:

• Users did not know how to extract value

• Conversations drifted without resolution

• System boundaries were unclear

Without structured guidance, the product risked becoming an interesting novelty rather than a reliable tool.

The challenge was to design an interaction model that balanced flexibility with clarity.

Operating Constraints

• Early-stage AI product

• Undefined interaction paradigm

• Limited engineering capacity

• Sensitive user inputs

• Need to define MVP quickly

Given the ambiguity of conversational AI and the emotional sensitivity of the domain, structural clarity and guardrails were critical.

Research Insights That Shaped the Model

• Users want emotional validation but also practical direction.

• Blank journaling increases abandonment; guided prompts increase completion.

• Visible progress reinforces continued engagement.

These insights suggested that free-form chat alone would not sustain long-term usage.

Methods: Qualitative user interviews, diary study, quantitative survey

Participants: 150 diverse Gen Z users

Exploration & Hypothesis Testing

Hypothesis: Structured conversational guidance would improve clarity and repeat usage over fully open-ended chat.

We tested:

-

Open chat with persona guides

-

Guided topic selection

-

Structured reflection loops

-

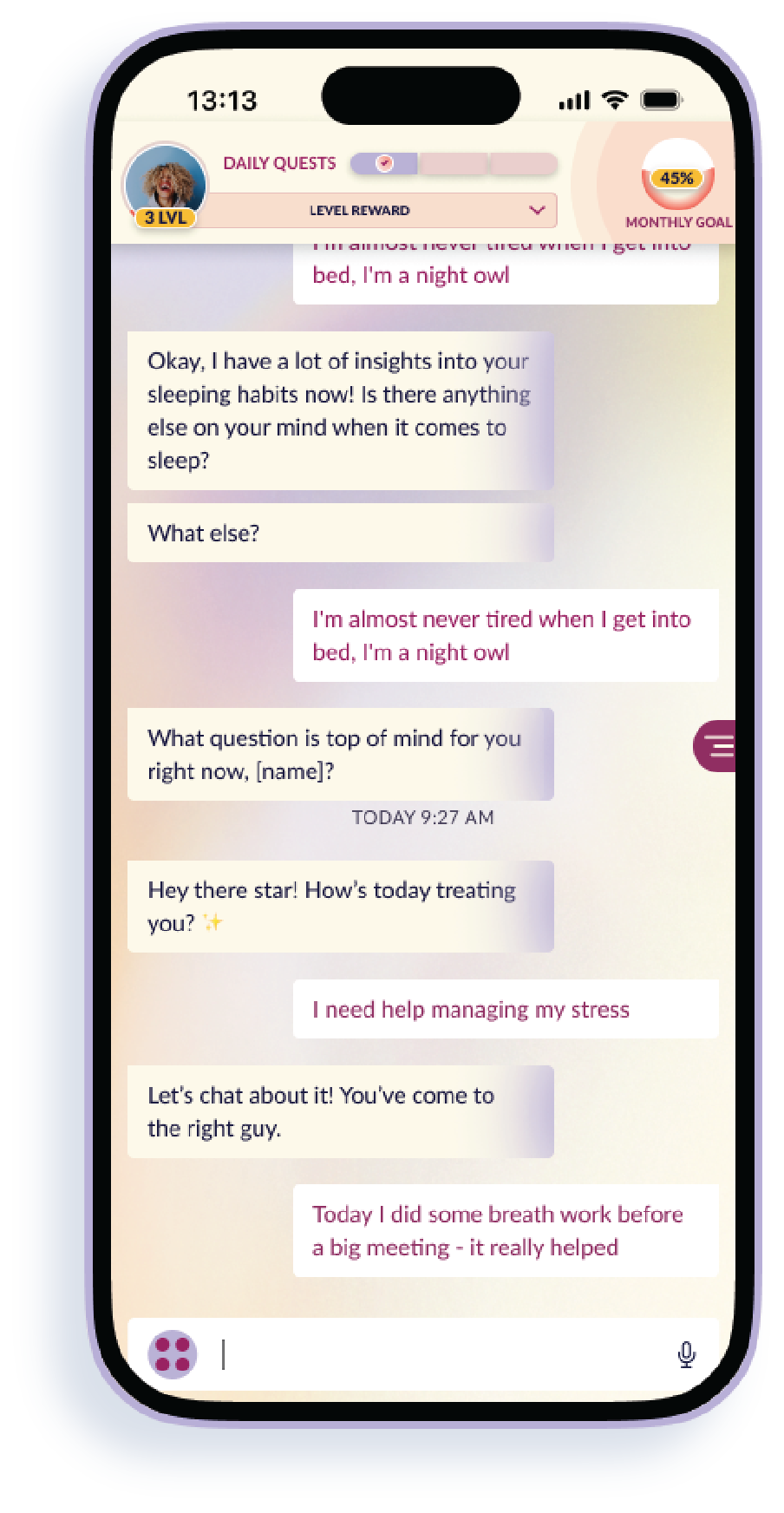

Micro-prompts and daily quests

Learning: Open chat increased message volume. Guided flows increased session completion and perceived usefulness.

Defining the Interaction Model

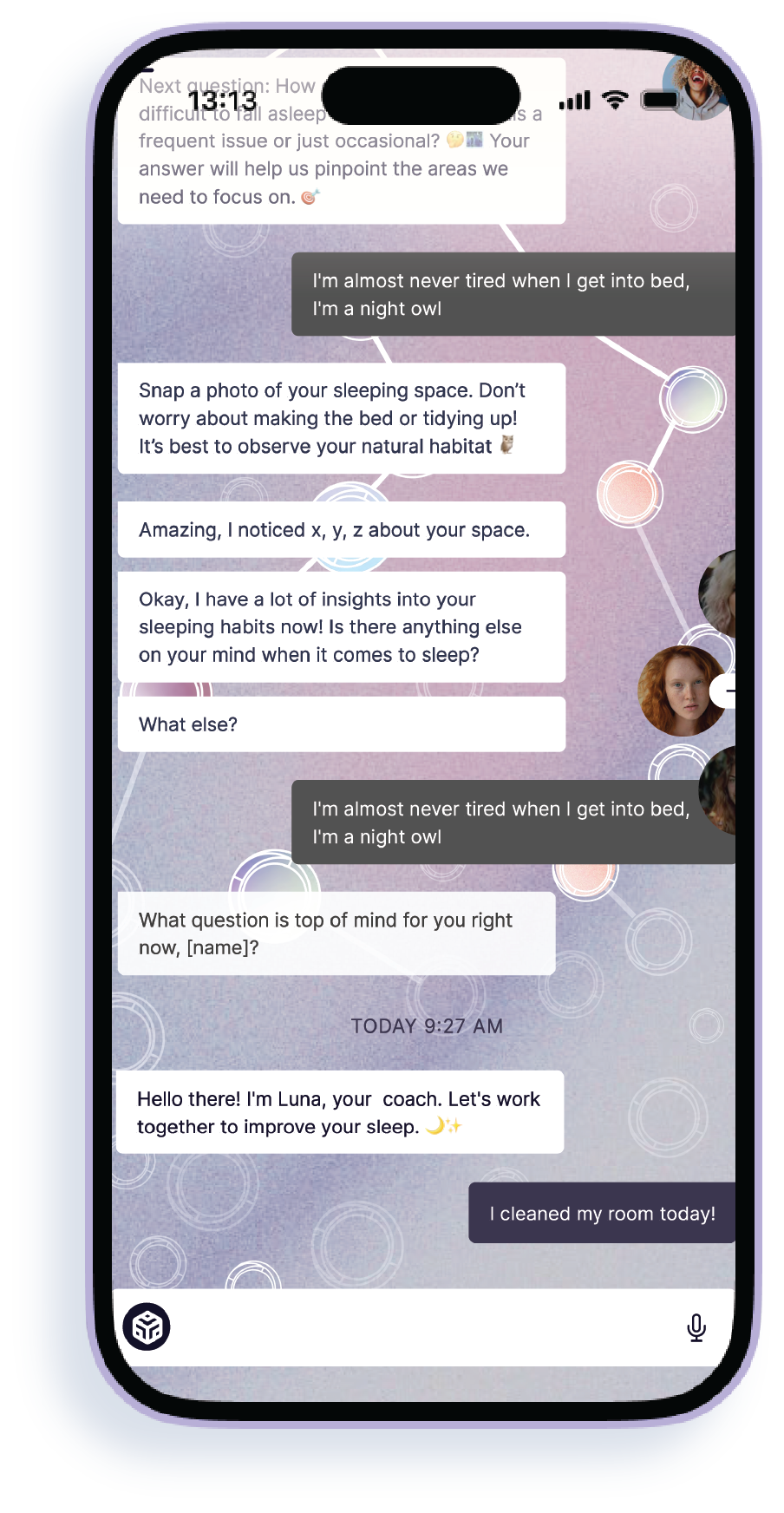

We shifted from persona-driven open chat to a hybrid model:

1. Topic-based entry points

2. Structured conversational loops

3. Optional free-form expansion

4. Clear session completion

5. Visible progress markers

We intentionally constrained conversational freedom to improve clarity, repeatability, and trust.

Designing for Boundaries

To prevent overreach and maintain trust, we:

• Avoided authoritative medical language

• Defined refusal/redirection patterns

• Limited personalization depth

• Reduced guide overload

• Clarified system limitations in UI

MVP Definition & Trade-offs

Shipped:

• Guided conversational flows

• Basic progress tracking

• Prompt library

• Subscription framework

• Minimal-effort entry paths

Deferred:

• Deep memory persistence

• Advanced branching logic

• Social layers

• Expanded gamification

Given engineering constraints and trust considerations, we prioritized structural clarity over experiential depth.

Aligning on Interaction Philosophy

Early stakeholder discussions revealed tension between:

• Open exploration

• Structured progression

Through facilitated workshops, we aligned on:

• Limiting guide count

• Introducing visible progression

• Defining completion states

This alignment reduced ambiguity in engineering implementation.

Outcomes:

• Defined a scalable interaction architecture

• Reduced conversational drift

• Increased session completion during testing

• Established guardrails for safe AI guidance

• Clarified MVP scope for engineering handoff

Key Learnings:

• AI personality must balance ability with neutrality.

• Structured prompts outperform blank-slate journaling.

• Guardrails must be defined before personality is layered on.

• Interaction clarity is more valuable than novelty.
